CSRD 2026: Why Your ESG Checklist is an Audit Trap

The Illusion of “Compliance”

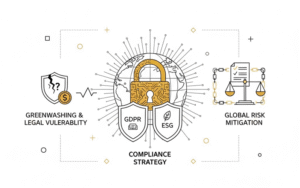

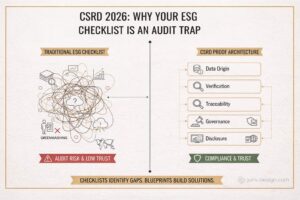

Most global organizations are currently preparing for the CSRD (Corporate Sustainability Reporting Directive) by working through checklists. Checklists are excellent for identifying weaknesses, but they are a dangerous foundation for building solutions.

As we approach the 2026 reporting cycle, the focus must shift from “Reporting” to “Proof Architecture”. If there is no defensible system behind your ESG data, your report is not a strategy; it is a liability and a legal exposure.

A checklist tells you where you are vulnerable. It does not tell you what you need to build.

I. Shifting from Narrative to Architecture

Historically, ESG has existed within marketing and communications. CSRD has moved it to the desk of the Chief Financial Officer (CFO) and General Counsel. Regulators are no longer interested in your “sustainability story”; they are interested in your data lineage.

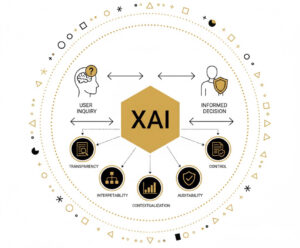

The European Sustainability Reporting Standards (ESRS) now require:

- Auditability: Every figure must be verifiable by a third party.

- Traceability: A clear digital path from source to table.

- Accountability: Board-level signatures on non-financial data.

These are not narrative requirements — they are structural requirements.

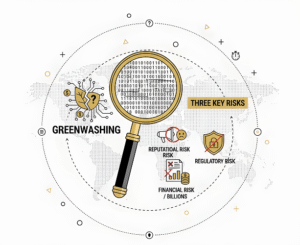

II. Why Global Reports Fail Audit Review

Even companies with a long ESG reporting history increasingly find that auditors reject their reports or approve them only conditionally. The failure rarely lies in the targets themselves; it lies in the infrastructure.

Common failure points include:

- “Orphaned” data: Numbers delivered via email with no timestamp and no record of their source.

- Black-box methodologies: Calculations (such as Scope 3 emissions) with no documented trail from inputs to result.

- Governance gaps: ESG data that exists in isolated silos, disconnected from the company’s legal and financial control framework.

The problem is not the content — the problem is the architecture that produces it.
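The failure points above can be turned into a mechanical check that runs before any figure reaches the report. The following is a minimal sketch, not a prescribed control: the `DataPoint` fields, the `audit_gaps` function, and all sample values are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class DataPoint:
    """One reported ESG figure plus the evidence an auditor would ask for."""
    metric: str
    value: float
    source_system: Optional[str] = None     # where the number came from; None = unknown
    recorded_at: Optional[datetime] = None  # when the number was captured
    method_ref: Optional[str] = None        # pointer to the documented calculation
    owner: Optional[str] = None             # accountable person or function


def audit_gaps(point: DataPoint) -> list[str]:
    """Return which of the three failure points apply to this figure."""
    gaps = []
    if point.source_system is None or point.recorded_at is None:
        gaps.append("orphaned")
    if point.method_ref is None:
        gaps.append("black-box")
    if point.owner is None:
        gaps.append("governance gap")
    return gaps


# A number that arrived by email, with nothing attached to it.
emailed = DataPoint(metric="scope3_tco2e", value=1250.0)

# A number that carries its origin, method, and owner (illustrative values).
tracked = DataPoint(
    metric="scope1_tco2e",
    value=310.5,
    source_system="erp-export",
    recorded_at=datetime(2025, 12, 31),
    method_ref="methods/scope1-v2",
    owner="group-controller",
)

print(audit_gaps(emailed))  # ['orphaned', 'black-box', 'governance gap']
print(audit_gaps(tracked))  # []
```

A check like this does not make a figure correct. It only shows, before the auditor does, which figures arrive without the evidence needed to defend them.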

III. The Blueprint: ESG as a System, Not a Document

To pass limited or reasonable assurance, ESG reporting must be structured as a five-layer defense system:

- Data Origin: Direct data sources (ERP, IoT) replacing manual estimates.

- Verification: Automated logical controls that detect anomalies before they reach the report.

- Traceability: A digital “pedigree” for every data point.

- Governance: Formal ownership of data and clearly assigned legal risk.

- Disclosure: Transformation of the underlying data into machine-readable, XBRL-tagged disclosures for regulators.

Without these five layers, your ESG report is simply a collection of claims that cannot be defended in court or at a board meeting.
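To make the five layers concrete, here is a minimal sketch of how they could fit together in code. It assumes a content hash (SHA-256) as the “pedigree” and a simple period-over-period tolerance as the automated control. Every function name, field name, and value is hypothetical, and the `disclose` step is only a stand-in, since real XBRL tagging requires a taxonomy and dedicated tooling.

```python
import hashlib
import json


def fingerprint(record: dict) -> str:
    """SHA-256 over the record's content, ignoring its own 'id' field."""
    body = {k: v for k, v in record.items() if k != "id"}
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def ingest(metric: str, value: float, unit: str, source: str, owner: str) -> dict:
    """Data Origin and Governance: capture a reading with its source and owner."""
    record = {"metric": metric, "value": value, "unit": unit,
              "source": source, "owner": owner, "parents": []}
    record["id"] = fingerprint(record)
    return record


def plausible(record: dict, previous_value: float, tolerance: float = 0.5) -> bool:
    """Verification: accept a reading only if it moved at most `tolerance`
    (as a fraction) from the prior period. The 50% default is illustrative."""
    return abs(record["value"] - previous_value) <= tolerance * abs(previous_value)


def aggregate(metric: str, inputs: list, owner: str) -> dict:
    """Traceability: a derived total whose 'parents' point at every input."""
    record = {"metric": metric, "value": sum(r["value"] for r in inputs),
              "unit": inputs[0]["unit"], "source": "derived", "owner": owner,
              "parents": [r["id"] for r in inputs]}
    record["id"] = fingerprint(record)
    return record


def is_intact(record: dict) -> bool:
    """Recompute the fingerprint; an edit made after sealing changes it."""
    return record["id"] == fingerprint(record)


def disclose(record: dict) -> dict:
    """Disclosure stand-in: a tagged fact that still carries its lineage."""
    return {"concept": record["metric"], "value": record["value"],
            "unit": record["unit"], "lineage": record["parents"]}


plant_a = ingest("electricity_kwh", 120000.0, "kWh", "erp:plant-a", "site-manager-a")
plant_b = ingest("electricity_kwh", 95000.0, "kWh", "iot:plant-b-meter", "site-manager-b")
total = aggregate("electricity_kwh", [plant_a, plant_b], "group-controller")

print(plausible(plant_a, previous_value=118000.0))  # True
print(is_intact(total))                             # True

tampered = dict(total, value=200000.0)  # someone edits the total by hand
print(is_intact(tampered))              # False
print(disclose(total)["lineage"] == [plant_a["id"], plant_b["id"]])  # True
```

One design caveat: a fingerprint only proves integrity if it is stored somewhere the person editing the data cannot rewrite. That is a governance decision, not a coding one.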

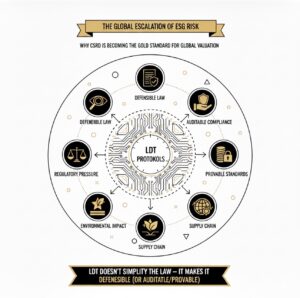

IV. The Fracture Point: Supply Chain

For global entities, CSRD reporting breaks down in the supply chain. A single key supplier without a verifiable data system can compromise the report of an entire group.

Your architecture must extend beyond your internal systems. The Blueprint applies to standardized supplier inputs just as it does to your internal ERP.
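One way to see how exposed a group is to its suppliers is to measure how much of a reported total is backed by supplier evidence. The sketch below is illustrative only: the `SupplierInput` structure, the 5% materiality threshold, and the sample figures are assumptions, not values taken from the CSRD or ESRS.

```python
from dataclasses import dataclass


@dataclass
class SupplierInput:
    supplier: str
    tco2e: float       # emissions attributed to this supplier
    evidence_ref: str  # pointer to a source export or document; "" if none


def evidence_coverage(inputs: list[SupplierInput]) -> float:
    """Share of the reported total that is backed by an evidence reference."""
    total = sum(i.tco2e for i in inputs)
    if total == 0:
        return 0.0  # nothing reported, so nothing is proven
    backed = sum(i.tco2e for i in inputs if i.evidence_ref)
    return backed / total


def material_gaps(inputs: list[SupplierInput], min_share: float = 0.05) -> list[str]:
    """Suppliers without evidence whose share of the total is at least min_share."""
    total = sum(i.tco2e for i in inputs)
    if total == 0:
        return []
    return [i.supplier for i in inputs
            if not i.evidence_ref and i.tco2e / total >= min_share]


inputs = [
    SupplierInput("supplier-a", 600.0, "exports/supplier-a-2025.csv"),
    SupplierInput("supplier-b", 300.0, ""),  # figure received with no evidence
    SupplierInput("supplier-c", 100.0, "exports/supplier-c-2025.csv"),
]

print(f"{evidence_coverage(inputs):.0%} of the total is backed by evidence")
print(material_gaps(inputs))  # ['supplier-b']
```

In this example a single supplier leaves 30% of the figure unsupported, which is the kind of gap that is far cheaper to find internally than during assurance.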

V. Blueprinting vs Implementation

A Blueprint is not your IT software, nor your legal advisor. It is the Master Plan that directs them.

Without a Blueprint:

- Costs escalate: You purchase software that “does not speak” to your auditors.

- Complexity paralyzes: Departments operate in silos, creating redundant data.

- Risk remains hidden: Gaps surface only when the auditor asks the first question.

CSRD compliance is not a reporting exercise. It is a systems-design challenge. In the regulatory environment of 2026, the rule is simple: if you cannot prove it, you cannot defend it.

As a practical extension of this article, I have prepared the ESG Proof Architecture.
